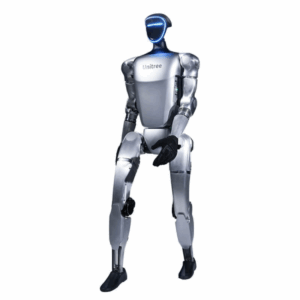

Product Overview

The Unitree G1 EDU U9 is a compact, foldable humanoid robot that stands approximately 1.32 m tall. Built with high-strength materials and industrial-grade joints, it is a robust platform for research in reinforcement learning, computer vision, and human-robot interaction.

Key Specifications: U9 Professional A

| Feature | Specification |
| --- | --- |
| Total Degrees of Freedom | 37 DoF (increased from 23 on the base model) |
| Hands | 2× Dex3-1 tactile (3-finger dexterous hands) |
| Tactile Sensors | Integrated arrays (33 sensors per hand) |
| Onboard AI Compute | NVIDIA Jetson Orin NX (16 GB), 100 TOPS |
| Knee Torque | 120 N·m (high-torque EDU version) |
| Max Payload | ~3 kg (arm) |
| Runtime | ~2 hours (9000 mAh quick-release battery) |

Core Features

- Tactile Manipulation: Unlike the base EDU models, the U9 has tactile sensor arrays in its fingers. These let the robot “feel” objects, enabling delicate grasping and advanced force-controlled manipulation that mimics human touch.
- Advanced AI Integration: The built-in Jetson Orin NX module provides 100 TOPS of AI compute for running real-time “System 2” reasoning, large language models (LLMs), and complex computer vision algorithms locally on the robot.
- Kinematic Agility: With 37 active joints (including a multi-DOF waist and specialized wrists), the U9 can perform fluid, human-like movements. Its foldable design collapses to just 69 cm for easy transport.
- Comprehensive Sensor Suite: The robot perceives its surroundings through a 3D LiDAR (Livox Mid-360) for 360° mapping and an Intel RealSense D435i depth camera for front-facing visual perception.

Developer & Research Focus

The G1 EDU series is an “open” platform. It supports:

- Secondary Development: Full access to high-level and low-level SDKs (C++/Python).
- AI Training: Support for UnifoLM (Unitree Robot Unified Large Model) and for imitation-learning and reinforcement-learning frameworks.
- Communication & Feedback: Dual encoders on every joint provide precise joint feedback, while Wi-Fi 6 and Bluetooth 5.2 support low-latency control and data exchange.

Use Cases

- Embodied AI Research: Training robots to interact with physical environments using vision and touch.
- Dexterous Manipulation Labs: Developing algorithms for tool use, assembly, and fine motor skills.
- STEM & Higher Education: A standardized platform for universities to teach humanoid robotics at a professional level.
